For decades, industrial robots have been powerful but rigid: they follow pre-programmed instructions and fail when the world deviates from what engineers anticipated. Google DeepMind's Gemini Robotics-ER 1.6, released on April 14, 2026, is the sharpest version yet of a new approach: giving robots the same kind of flexible reasoning that powers chatbots like Gemini, but aimed at understanding and acting in physical space. The model can now read pressure gauges, thermometers, and digital readouts with 98% accuracy when paired with its agentic vision system, a capability that emerged directly from collaboration with Boston Dynamics, whose Spot robot patrols industrial facilities.

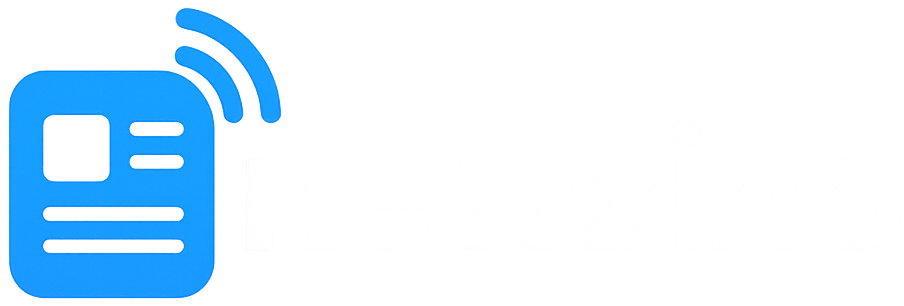

This release is one move in a broader race to build what the industry calls "physical AI": foundation models that let any robot understand novel environments without being explicitly programmed for them. Google DeepMind, NVIDIA, and a growing roster of Chinese manufacturers are all converging on the same bet: that the architectures behind large language models can be adapted to give robots general-purpose intelligence. The stakes are enormous. The humanoid robot market is projected to grow from $2 billion in 2024 to over $13 billion by 2029, and the companies that control the underlying AI models will largely determine what each robot built on them is able to do.